All data has value. Sometimes you just don’t know what that value is yet. The first step is capturing and storing the data, but the challenging part is sifting through it all effectively and efficiently in order to extract relevant or critical insights.

In the first four parts of this series we looked at how to prevent SQL injection (SQLi) with secure coding practices, the value of vulnerability scanning and penetration testing, the use of a layered defense including a web application firewall (WAF) and intrusion detection system (IDS), and the importance of identity and access management and effective patching. To wrap things up, we will examine the importance of log data.

5. Intelligent and consistent log analysis

The various elements of your infrastructure produce logs – access, system, etc. – that contain all sorts of data about what is happening in your environment. Your security technologies (like intrusion detection systems (IDS) and web application firewalls (WAF) that we mentioned earlier) also produce data about your environment by capturing traffic and producing logs based on that traffic. Analyzing all that high-value data in an intelligent and consistent fashion can lead to information about successful cyber attacks against your environment.

Pulling all that data into the same central spot (log management tools are a good solution here) gives you a single pane of glass for the next phase: analysis of all that data. For instance, your intrusion detection system may have had a signature fire on malicious traffic, but the attacked server was not vulnerable to that cyber attack. You can probably conclude that the attack failed in that scenario. However, if the server logs show a successful login and a large amount of traffic going out to the Internet shortly after the attack, you likely have an issue that needs to be raised to the incident response team.
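The correlation described in that scenario can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Event` fields, the source labels (`ids`, `auth`, `netflow`), the 30-minute window, and the 100 MB threshold are all hypothetical and would need to match whatever your log management tool actually collects.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Event:
    """One normalized log record. All field names here are hypothetical."""
    time: datetime
    source: str         # "ids", "auth", or "netflow"
    host: str           # server the event relates to
    kind: str           # "alert", "login_success", or "outbound"
    bytes_out: int = 0  # only meaningful for "outbound" events


def find_suspicious_hosts(events, window=timedelta(minutes=30),
                          byte_threshold=100_000_000):
    """Return hosts where an IDS alert is followed, within `window`,
    by a successful login and a large volume of outbound traffic."""
    ordered = sorted(events, key=lambda e: e.time)
    suspicious = []
    for alert in ordered:
        if alert.source != "ids" or alert.kind != "alert":
            continue
        # Everything seen on the same host shortly after the alert.
        later = [e for e in ordered
                 if e.host == alert.host
                 and alert.time < e.time <= alert.time + window]
        logged_in = any(e.kind == "login_success" for e in later)
        total_out = sum(e.bytes_out for e in later if e.kind == "outbound")
        if (logged_in and total_out >= byte_threshold
                and alert.host not in suspicious):
            suspicious.append(alert.host)
    return suspicious


t0 = datetime(2024, 1, 1, 12, 0)
events = [
    Event(t0, "ids", "web-1", "alert"),
    Event(t0 + timedelta(minutes=2), "auth", "web-1", "login_success"),
    Event(t0 + timedelta(minutes=5), "netflow", "web-1", "outbound", 250_000_000),
    Event(t0, "ids", "web-2", "alert"),  # alert only: the attack likely failed
]
print(find_suspicious_hosts(events))  # ['web-1']
```

Only `web-1` is flagged, because it is the only host where the alert was followed by both a login and heavy outbound traffic. A real deployment would also have to handle clock skew between sources and far larger volumes than an in-memory list can hold.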

The cyber attack in the example scenario above seems like a simple issue to find. However, finding all the pieces needed to correlate that cyber attack when you have terabytes or more of data is not simple at all. The sheer volume of data that can be captured makes it practically impossible for humans alone to sort through. Even small organizations can produce large amounts of traffic data and logs, especially in today’s cloud-enabled world where many web applications can be deployed quickly.

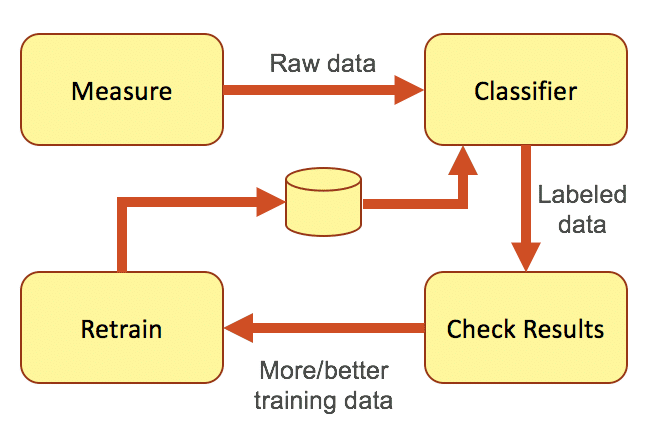

One way of sorting through that amount of data is by creating a big data group within your organization and bringing in an analytics engine to evaluate and identify attacks. Many organizations today are also leaning on machine learning to help refine that data even more. The key requirements for making any kind of analytics/machine learning system work well are consistent measurement techniques, good training data for the engine, and human verification of the results. Steps like making sure the data is consistent, preprocessing the data, building models to train the engine, and determining whether the results are valid will ensure that the results coming from the analysis can be trusted.

But doing all that log analysis is costly and difficult. Analytics and machine learning don’t help much if there aren’t people behind the scenes like data scientists and other experts who can make sure everything is working properly. While a large enterprise may be able to staff that kind of effort, most companies don’t have those kinds of resources.

Outsourcing that effort is an option that many organizations take to sort through their big data. But what if your company’s primary business is retail? You might have a very large data set on pricing, brands, store location, marketing, etc. But it is unlikely that you have much data on cyber attacks from outside your organization. With a limited data set, it’s hard to meet that key point of having good data to train the engine as mentioned above.

Employing a managed security service provider (MSSP) to help might be valuable because they should have a presence in a large number of organizations, so their data set can be very large. However, typical MSSPs manage many different brands of cloud security appliances (e.g. intrusion detection systems and web application firewalls), which means that data coming from all those devices – even for very similar cyber attack types – will be different. It is very difficult – if not impossible – to satisfy the key requirement of consistent measurement techniques if the data itself is not consistent.
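To make the consistency problem concrete, here is a minimal sketch of what mapping two appliance formats onto one schema might look like. Both "vendor" formats, every field name, and the severity mapping are invented for illustration and do not describe any real product's log format.

```python
from datetime import datetime, timezone


def normalize_vendor_a(record):
    """Hypothetical vendor A: epoch seconds, "sig" name, numeric severity 1-5."""
    return {
        "time": datetime.fromtimestamp(record["ts"], tz=timezone.utc),
        "src_ip": record["src"],
        "attack_type": record["sig"].lower().replace(" ", "_"),
        "severity": record["sev"],
    }


# Assumed mapping from vendor B's text levels onto vendor A's 1-5 scale.
SEVERITY_B = {"low": 1, "medium": 3, "high": 5}


def normalize_vendor_b(record):
    """Hypothetical vendor B: ISO 8601 timestamp, "category" name, text severity."""
    return {
        "time": datetime.fromisoformat(record["timestamp"]),
        "src_ip": record["client_ip"],
        "attack_type": record["category"].lower().replace("-", "_"),
        "severity": SEVERITY_B[record["level"]],
    }


# The same SQL injection attempt as reported by the two hypothetical devices.
alert_a = {"ts": 1704110400, "src": "203.0.113.7",
           "sig": "SQL Injection", "sev": 5}
alert_b = {"timestamp": "2024-01-01T12:00:00+00:00", "client_ip": "203.0.113.7",
           "category": "sql-injection", "level": "high"}

print(normalize_vendor_a(alert_a) == normalize_vendor_b(alert_b))  # True
```

Even in this toy case, someone has to decide how timestamps, attack names, and severity scales line up, and those decisions multiply with every additional brand of appliance and every signature update.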

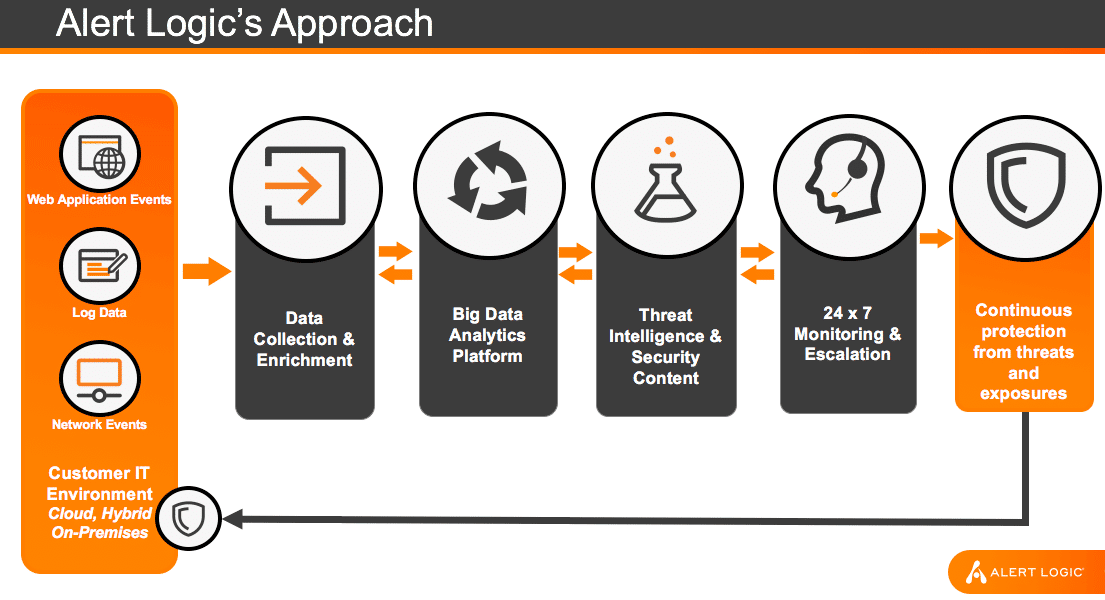

The best way of making sense of all that data is by outsourcing the analytics/machine learning to an organization that: 1) aggregates a large amount of useful, consistent training data from multiple environments that all use the same infrastructure; and 2) uses experts to build high quality log analysis models and verify results. This meshes really well with what we’re doing with analytics and machine learning here at Alert Logic.

At Alert Logic, we collect in the neighborhood of 20 petabytes of data a year from our customers (and as we grow, so does that dataset). The alert data we pull from that huge dataset comes entirely from the infrastructure we provide. This means that we have a collection of consistent, high quality, and high volume cyber security data. We have data scientists to curate and label the subsets of data needed for training the machine learning algorithm, as well as cloud security and web application domain experts and SOC analysts to guide the data scientists. This creates a feedback loop that evaluates the algorithm’s performance and continually improves the results, while our production team converts the data scientists’ results into a scalable, high quality detection implementation. In short, Alert Logic is the only solution available that integrates all required elements that can make machine learning add tangible security value to customers.

Stronger web application security

The web is not going away. Business via the web will continue to grow, especially with the cloud creating faster and more agile ways of building web applications and storing data. That means cloud security is critical to keeping those web applications and databases protected. The first line of defense against web application attacks like SQL injection is making sure your application is coded securely. Training developers to think securely – specifically, to treat all input coming into the web application as untrusted – will go a long way in stopping cyber attacks.

But the burden of web application security should not rest entirely on the developers. The business should also invest in cyber attack detection technologies like web application firewalls and intrusion detection systems. These types of devices allow the security team to see what kinds of cyber attacks are happening so they can decide on the response based on their IT security policy.

The basic blocking and tackling of cloud security should also be given enough attention. Creating, implementing, and maintaining a strong identity and access management strategy is very important when controlling access to your resources in the cloud. Concepts like least privilege and managing permissions with groups can go a long way in limiting resource access to the right individuals at the right time for the right reasons. Patching your systems is another one of those basic tasks that can get missed or set up inadequately. Which patching system is right for you depends a lot on your environment. Luckily, there are a lot of choices.

And finally, the intelligent analysis of the data coming from your environment can help analyze successful cyber attacks, find attacks that might have been missed, and refine detection of those cyber attacks for the future. But to do that, you have to figure out how to go through all that data, which is substantial even for small organizations. Analytics and machine learning are very promising in their ability to help sort through the quagmire, but that entails building a team of all kinds of different experts, as well as actually bringing in more data from outside your organization to enrich the mounds of data you already have. It’s a daunting task, but it is one with which Alert Logic is uniquely qualified and positioned to help.

The overall lesson here is that while there are a lot of things that need to be done to protect your SQL-based apps in the cloud, there are a few things that can help tremendously right out of the gate. Don’t be intimidated by it all. Keep doing business, and keep growing in the cloud. And let us know at Alert Logic if we can help.

This post was a collaborative effort with Joe Hitchcock.